Hi

While trying to improve our server with a profiler, I've encountered some memory issues that I don't understand.

1. the server is running in embedded mode

2. the static EntityManagerFactory (m_manager_factory in the example) is opened via

m_manager_factory = Persistence.createEntityManagerFactory(db_name)

3. After running some automated tests for ca. 1 h, all EntityManagers are closed and removed from memory (checked in the profiler)

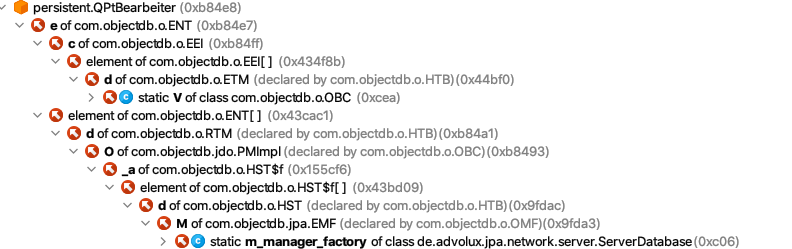

4. nevertheless, there are lots of identical copies of entities in memory (QPtBearbeiter in the example). There are only 8 QPtBearbeiter entities in the database, but more than 236 copies of those entities in memory (after closing all EntityManagers and, of course, running the garbage collector)

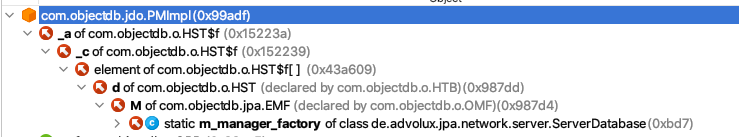

5. the GC root of all those objects is our single, static EntityManagerFactory object (i.e. m_manager_factory), as you can see in the profiler screenshot.
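
For anyone who wants to reproduce the "still in memory after GC" check from points 3 to 5 without a profiler, here is a minimal sketch using only the JDK. ReachabilityCheck and isCollected are hypothetical names, not part of our server code or of any JPA API. Since System.gc() is only a request to the JVM, the result is an indication, not a proof.

```java
import java.lang.ref.WeakReference;

// Hypothetical helper for illustration only (not from our server, not a JPA API).
public class ReachabilityCheck {

    // Kept in a static field so the object stays strongly reachable,
    // similar to objects that hang off a static factory.
    static Object pinned;

    // Requests a GC a few times and reports whether the referent was cleared.
    // System.gc() is only a hint, so treat the answer as an indication.
    static boolean isCollected(WeakReference<?> ref) {
        for (int i = 0; i < 5 && ref.get() != null; i++) {
            System.gc();
            try {
                Thread.sleep(20);
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt(); // restore the flag and stop waiting
                break;
            }
        }
        return ref.get() == null;
    }

    public static void main(String[] args) {
        // Case 1: the object is still referenced from a static field.
        pinned = new Object();
        WeakReference<Object> pinnedRef = new WeakReference<>(pinned);
        System.out.println("pinned object collected: " + isCollected(pinnedRef));

        // Case 2: no strong reference is kept, so the object is eligible for collection.
        WeakReference<Object> releasedRef = new WeakReference<>(new Object());
        System.out.println("released object collected: " + isCollected(releasedRef));
    }
}
```

In our case the entity copies behave like the pinned object: something reachable from the static factory still holds them, so they survive every GC.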

First I thought it might be the L2 cache or something similar, but then I could not understand why there are 236 (seemingly identical) copies of this entity.

Of course, other entities (but not all) are affected, which results in a slow but steady increase in the memory consumption of our server. With really big databases, some customers told us that they need to restart the server after some hours (there might be some additional issues on our side).

Could you please give me a hint on how we could avoid this behavior? I've added a screenshot from the profiler.

best regards

Arne

PS: Upload does not seem to work for me. The PNG file is corrupted after upload (and download).

Or am I missing something?

Can you please give me a hint on how to properly upload the file?

PPS: Thanks, copy & paste works perfectly.